Scale AI Solved the Right Problem at the Right Moment. The Meta Deal Changed Everything About What the Brand Has to Say Next.

In 2016, Alexandr Wang was nineteen years old and had recently left MIT.

He had worked as a machine learning engineer at Quora, where he solved AI problems PhD holders struggled with. He was watching the AI boom from inside the engineering rooms.

And then he saw something that almost nobody else was building for.

The AI models of the mid-2010s were not failing because the algorithms were wrong. They were failing because they lacked data: accurately labeled, structured, human-verified data at the scale that training a real-world AI system requires. Autonomous vehicles needed millions of labeled images to learn to detect pedestrians at dusk. Content moderation systems needed labeled examples of harm across every language and context. Language models needed annotated outputs to learn what good answers looked like.

Wang described the insight simply: “Data is going to be one of those hurdles.” He built an API that took in raw data and returned it labeled. He called it Scale AI.

What followed is now infrastructure history.

Scale trained the models that trained the models. OpenAI, Google, Microsoft, Meta, and the US Department of Defense became customers. By 2024, the company was generating close to $1 billion in revenue. By June 2025, Meta had invested $14.3 billion for a 49% stake, Wang had departed to lead Meta’s Superintelligence Labs, and OpenAI and Google had paused their Scale relationships over data confidentiality concerns.

Scale AI is now a different company from the one Wang built.

This analysis examines both.

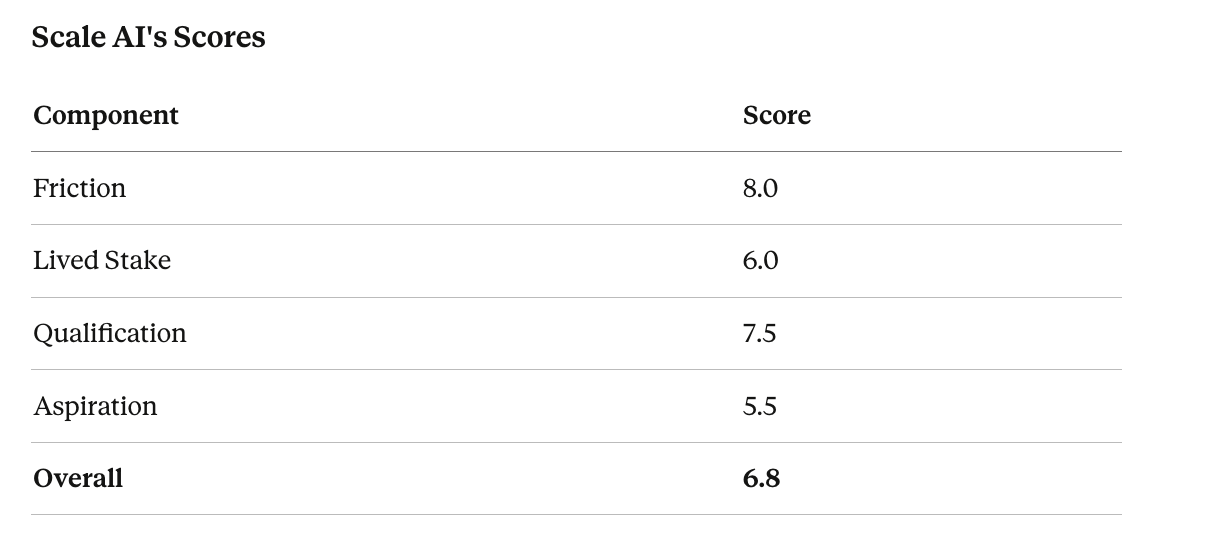

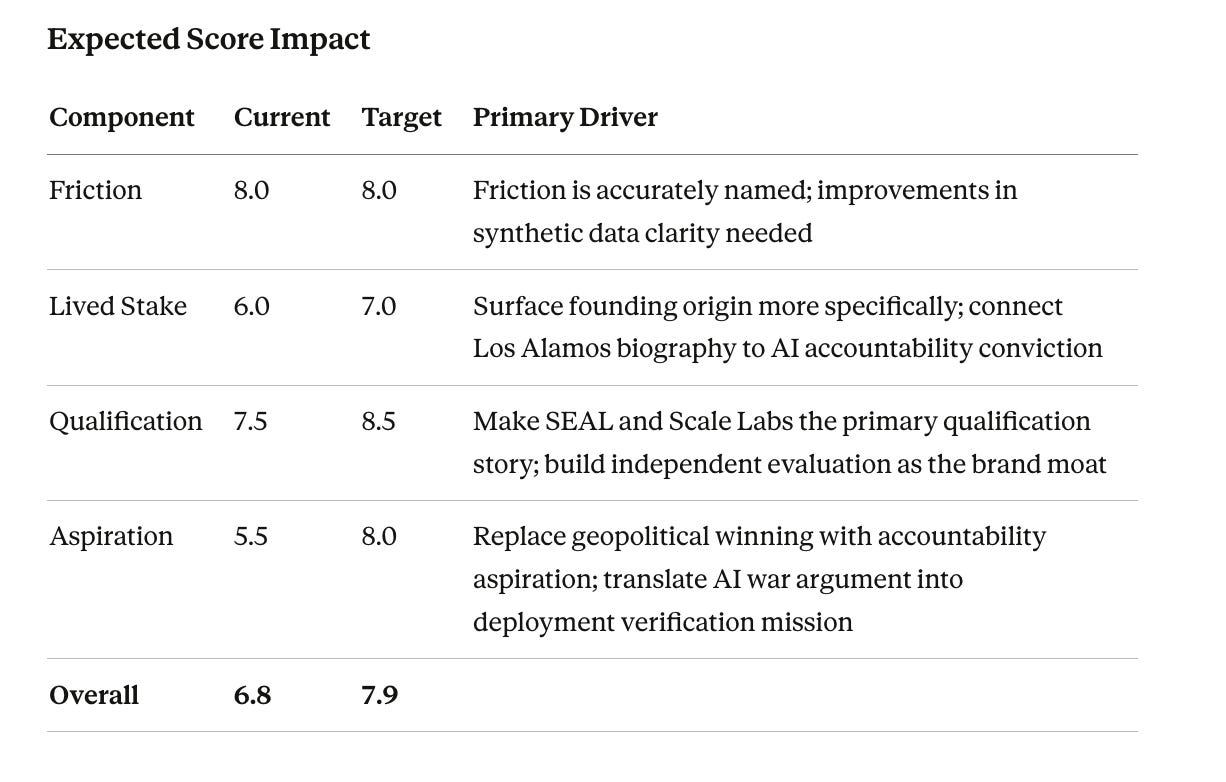

I ran Scale AI through my four-component Narrative Authenticity Framework. It scores 6.8 overall. The founding friction was correct and prescient. The qualification built over nine years of data infrastructure is real. The lived stake is observer-derived rather than participant-derived. And the aspiration, post-Meta, is the most unresolved brand question in enterprise AI.

The Framework

I score companies on four components, each out of 10:

Friction names the problem everyone feels but nobody has fixed.

Lived Stake measures authentic proximity to that problem. Did the founders live inside it, or observe it from the outside?

Distinctive Qualification identifies the structural insight or non-obvious advantage that makes this company the right one to solve it.

Real Aspiration describes the specific transformed future state the company is building toward. Not a mission statement. A world that actually changes.
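The overall figure quoted in this analysis is consistent with a simple average of the four component scores. The framework as described here does not state how the overall number is derived, so the following is a minimal sketch under two assumptions: equal weights, and half-up rounding to one decimal place. The function name `overall_score` is illustrative only. The component values are the Scale AI scores given in the sections that follow.

```python
from decimal import Decimal, ROUND_HALF_UP

# Component scores for Scale AI as stated in this analysis (each out of 10).
SCALE_AI_SCORES = {
    "Friction": Decimal("8.0"),
    "Lived Stake": Decimal("6.0"),
    "Distinctive Qualification": Decimal("7.5"),
    "Real Aspiration": Decimal("5.5"),
}


def overall_score(scores):
    """ASSUMPTION: overall = unweighted mean, rounded half-up to one decimal.

    The article does not specify the aggregation rule; this is one rule
    that reproduces the published overall figure.
    """
    mean = sum(scores.values(), Decimal("0")) / len(scores)
    return mean.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


print(overall_score(SCALE_AI_SCORES))  # 27.0 / 4 = 6.75, which rounds to 6.8
```

Decimal arithmetic is used so that the halfway case (6.75) rounds predictably. A different weighting would produce a different overall number from the same four components.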

A Note on Moment

Scale AI is analyzed at a uniquely unstable moment in its history. The founder who defined the brand departed in June 2025. Its largest commercial customers, OpenAI and Google, paused their relationships immediately after the Meta deal. The company lost its perceived neutrality as a data infrastructure provider to the AI industry at large and has since been rebuilding its customer base and identity.

This analysis evaluates Scale AI on the strength of what it built and the narrative position it occupied through 2025, while addressing directly what the Meta deal means for the brand going forward.

Friction: 8.0

The data-bottleneck insight that led to Scale AI was correct before anyone believed it and has been validated by every major development in AI since.

In 2016, most of the AI industry focused on algorithms and computing. The field was captivated by the breakthroughs in architecture that had produced AlphaGo and the early language models. Data was assumed to be a solvable problem, a detail that infrastructure and engineering teams would figure out. Wang saw something different. He saw that data was not a detail. It was the bottleneck. The AI models of the era were hungry for labeled examples at a scale that no existing workflow could produce efficiently, accurately, or at a cost that would allow companies to train at the level they needed.

The autonomous vehicle sector made the friction visible first. A self-driving car system needs to recognize pedestrians, cyclists, construction workers, emergency vehicles, and road debris under every lighting condition, weather pattern, and country’s traffic conventions. Each of those recognition tasks requires thousands to millions of labeled images before the model develops a reliable judgment.

No amount of algorithmic elegance substitutes for labeled data at that volume. Scale built the pipeline.

The friction has evolved and deepened as AI has advanced. The reinforcement learning from human feedback that made ChatGPT legible and useful to non-experts required human annotators to rate outputs, identify errors, and train models toward better responses. The red-teaming that AI safety evaluation depends on, systematically probing frontier models for vulnerabilities, biases, and failure modes, requires human judgment that automated testing cannot replicate. Scale’s Safety, Evaluation, and Alignment Lab (SEAL) built the infrastructure for both.

The score is 8.0 because the data friction is structurally correct and has remained so across nine years of AI development. Wang’s 2016 insight did not become less true when the models got larger. It became more true. The demand for high-quality human-verified training data has grown with every capability leap, not shrunk.

The score stops at 8.0 rather than reaching the highest marks in this series because the friction, while real, has been complicated by the emergence of synthetic data generation and by some frontier labs moving training data production in-house. The data bottleneck argument is still largely correct. It is no longer as clean as it was in 2016.

Lived Stake: 6.0

Wang’s founding insight was observer-derived, and the brand has not fully distinguished between observation and participation.

Wang grew up in Los Alamos, New Mexico, the birthplace of the atomic bomb, the son of Chinese immigrant physicists who worked at Los Alamos National Laboratory. The environment produced a genuine orientation toward high-stakes, consequential technology. He came of age understanding that what researchers build in laboratories shapes what the world becomes.

At MIT and at Quora, he observed AI development from inside the engineering rooms. He saw the data problem not because he was trying to train a model and failing, but because he was an unusually perceptive observer of the workflow that other engineers were struggling with. The insight “data is the bottleneck” came from watching other people hit that bottleneck, not from having hit it himself while trying to build something specific.

That is a real and valuable form of insight.

It is the insight of someone who is unusually good at identifying invisible structural problems before they become visible to the broader market. At nineteen, Wang had the pattern recognition of someone much older in the field. The willingness to drop out of MIT and build what he saw missing, before there was a category for it, before venture capital understood why it mattered, is genuinely admirable.

What it is not is the lived stake of someone who needed this product to exist because something had failed them directly. The highest lived stake scores in this series, Patagonia, Patreon, and Tempus, belong to founders who lived the problem, not founders who observed it. Wang observed the problem with extraordinary clarity. He did not live inside it.

The score is 6.0 because the observation-derived insight is real, genuine, and prescient. The gap to a higher score is the same gap visible in other observer-derived companies in this series: the brand asks for a level of trust and conviction that is most compellingly earned by founders who were participants in the friction, not observers of it.

The complicating factor post-2025: Wang himself has departed. The lived stake question for Scale AI going forward is a question about the new leadership’s relationship to the founding insight, and whether that insight is still the company’s organizing conviction or whether it has been superseded by the defense and government positioning that Wang developed.

Qualification: 7.5

Scale AI’s qualification was among the strongest in the industry through 2024. The Meta deal changed the calculus significantly.

The pre-Meta qualification case was built on four assets. The depth of the data pipeline, developed across nine years and hundreds of AI model training projects, including some of the most consequential models ever deployed. The SEAL research division, which built proprietary expertise in AI model evaluation, red-teaming, and alignment that no data labeling competitor could match. The defense and government relationships, which represented a combination of security clearances, compliance infrastructure, and institutional trust that took years to build. And the network of clients, which included every major AI lab and most of the major technology companies developing AI applications.

The Meta deal dismantled the most valuable element of that qualification in one announcement.

Scale AI’s commercial model as an independent data infrastructure provider depended on neutrality. Every AI lab and every technology company that trained models on sensitive data needed confidence that their training data, their model evaluation results, and their capability assessments were not visible to their most powerful competitor. When Meta acquired a 49% stake and Wang departed to lead Meta’s AI efforts, that confidence evaporated. OpenAI, Google, and xAI did not pause their Scale relationships because of a contractual provision. They paused them because the trust infrastructure that made Scale’s neutrality credible had collapsed in a single deal.

The qualification that remains is meaningful. The defense and government contracts, including the Thunderforge project with the Department of Defense, represent relationships built on security infrastructure and institutional alignment that the Meta deal did not directly compromise. The Scale Labs research division, expanded in March 2026, represents a genuine pivot toward AI evaluation, benchmarking, and safety infrastructure that has value independent of the data labeling business.

The score is 7.5 rather than higher because the qualification landscape post-Meta is genuinely uncertain. The defense and government positioning is real and growing. The commercial AI lab positioning is damaged and rebuilding. The net of those two trajectories is not yet clear.

Real Aspiration: 5.5

“America must win the AI war” is a political position, not a brand aspiration. It is also the statement that Scale AI committed to most publicly in 2025.

Wang took out a full-page advertisement in The Washington Post in January 2025, addressed directly to President Trump, declaring that America must win the AI war against China. He attended Trump’s inauguration. He testified before Congress. He met with military and defense leaders. He built a brand identity around American AI supremacy in a way that was strategically aligned with Scale’s defense contracts and with Wang’s personal convictions about AI geopolitics.

The aspiration argument behind that positioning is available and partially compelling. Wang grew up at Los Alamos. He understands what it means for a national laboratory to develop transformative technology that shapes geopolitical power. He has a genuine, biographical conviction, one that most technology leaders either lack or do not act on, that the development of AI has strategic implications for national security. His view that AI trained on different values produces different outcomes, that American AI and Chinese AI are not neutral substitutes for each other, is defensible and well-reasoned.

The problem with “America must win the AI war” as an aspiration is twofold. First, it is explicitly divisive. When Wang made this argument at Web Summit Qatar, an overwhelming majority of the audience disagreed. A brand aspiration that a majority of the global audience actively rejects is not functioning as an aspiration. It is functioning as a political stance. Second, it is incomplete. It names a competitive frame without naming a transformed world. America winning the AI war is not a description of what the world looks like when Scale AI has done its work well. It is a description of a geopolitical outcome that Scale is one input into.

The aspiration that Scale AI could build around, and has not, is something more specific to what data infrastructure actually does. The best AI systems are only as good as the data they are trained on, the human evaluation that catches their errors, and the red-teaming that finds their failure modes before deployment. Scale AI has been the organization responsible for all three at the frontier of AI development for nine years. The aspiration available to that history is one about accountability: a world where AI that affects people’s lives has been verified by human judgment before it is deployed, where the gap between what a model claims to do and what it actually does has been measured and reduced by someone outside the lab that built it.

That aspiration is specific, consequential, and politically portable in a way that “America must win the AI war” is not. It applies equally to American, European, Indian, and Brazilian AI systems. It names a transformed world rather than a geopolitical winner.

The score is 5.5 because the current aspiration is a political position that alienates a significant portion of the global audience Scale serves. The path to a higher score does not require abandoning the conviction about AI geopolitics. It requires embedding that conviction in an aspiration about what accountable AI development looks like, and letting the defense and national security work speak for itself as one expression of that larger argument.

The Central Question: What Is Scale AI, Without Wang?

The most important brand question Scale AI faces in 2026 is one that most companies never have to answer.

Wang was not just the CEO of Scale AI. He was the brand. His youth, his conviction, his policy presence, his willingness to make explicitly political arguments about AI geopolitics, his “wartime CEO” description from inside Meta, his full-page Washington Post advertisement, all of those were brand expressions that lived in a specific person. When that person departed to lead Meta’s superintelligence effort, he did not leave a brand behind. He left a company with a nine-year history in data infrastructure, a defense-sector positioning, and a research division that was only starting to build its own identity.

Jason Droege, the new CEO, is a former Uber executive with a platform and operations background. The company is pivoting toward enterprise AI applications and government contracts as its primary growth vectors. The Scale Labs research division, with its SEAL methodology and Humanity’s Last Exam benchmark, represents the most intellectually interesting brand direction available to the post-Wang company.

The brand rebuilding challenge is not about finding a new personality for Scale. It is about finding the conviction that survives the personality. The data-as-bottleneck insight was Wang’s. But the infrastructure built from that insight, the evaluation methodology developed in SEAL, the defense sector alignment, the research into AI model reliability and risk, those belong to the organization.

The aspiration that could anchor Scale’s brand post-Wang is the evaluation aspiration: that every AI system deployed in consequential contexts should be independently evaluated before deployment, that the gap between lab performance and real-world performance should be measured and published, that the human judgment Scale has been applying to AI for nine years is not a service to AI labs but a check on them.

That is a stronger aspiration than “America must win the AI war” because it is about what Scale does rather than what it wants geopolitically. It is also one that the defense contracts, the SEAL methodology, and the Scale Labs research all support.

Three Strategies to Raise the Score

Strategy One: Build the Evaluation Aspiration as the Post-Wang Brand

Current state: Scale AI has a data-labeling heritage, a defense-sector positioning, and a nascent research identity within SEAL and Scale Labs. None of these has been assembled into a primary brand aspiration that survives Wang’s departure.

What to do: The most defensible and consequential aspiration available to Scale AI is about independent evaluation. Every frontier AI model is evaluated by the organization that built it. Scale AI has spent nine years building the methodology, the infrastructure, and the organizational independence to evaluate AI from outside. That is the function that makes consequential AI deployment less dangerous. SEAL, Humanity’s Last Exam, and Scale Labs are all expressions of this function.

The aspiration to build around: AI deployed in consequential contexts, such as medicine, law, defense, and government services, should be independently evaluated before it affects people’s lives. Scale has spent nine years building the capacity to do that. The brand should say so.

Tactics:

Build a “Verified AI” brand platform that positions Scale Labs and SEAL as the independent evaluation layer that AI deployment requires. The argument: you wouldn’t approve a drug without independent clinical trials. You shouldn’t deploy AI in consequential contexts without independent capability and safety evaluation. Scale AI is that independent evaluation.

Publish an annual “State of AI Reliability” report using Scale’s own evaluation data, covering benchmark performance versus real-world performance gaps, failure modes by model type, and the categories of deployment where AI evaluation is most frequently underdone.

Strategy Two: Reconnect the Data Infrastructure Heritage to the Evaluation Mission

Current state: Scale AI’s nine-year data labeling history and its SEAL evaluation work are presented as separate business lines rather than as the same conviction applied at two stages of the AI development cycle.

What to do: The data labeling work and the model evaluation work are not different businesses. They are the same belief applied sequentially. You get better AI by ensuring the training data is accurate and verified by human judgment. You then verify the resulting model is behaving as intended through independent evaluation. Both are expressions of the same foundational conviction: that AI systems need human accountability built into their development, not added on afterward.

Tactics:

Build a “Human Accountability Layer” brand narrative that describes Scale’s core function across both its data and evaluation businesses. The argument: the AI systems that matter most, in medicine, in defense, in government, in legal contexts, are the ones where human accountability cannot be removed from the development process. Scale has been the organization responsible for that layer since 2016. Every model it has helped train is more reliable because human judgment verified the data. Every model it has evaluated is more understood because human evaluation measured its real capabilities.

This narrative also handles the neutrality question post-Meta more elegantly than the current approach. Independent evaluation is credible only if the evaluator is genuinely independent. Scale’s defense contracts and government relationships are the demonstration of that independence from commercial AI lab interests. The narrative that builds on that is: Scale evaluates AI for the people who will live with the consequences, not the people who build it.

Strategy Three: Resolve the Geopolitical Aspiration into a Deployability Argument

Current state: “America must win the AI war” is a political position that alienates global customers and functions as a defense sector positioning rather than a brand aspiration.

What to do: The underlying conviction behind the American AI supremacy argument is that AI systems trained on different values produce different outcomes, and that the values embedded in AI training matter as much as the capabilities. That conviction is well-reasoned and has real empirical support. It should be translated into a brand argument about evaluating what values AI systems actually reflect, not a political argument about geopolitical winners.

The translation: the world needs AI evaluation infrastructure that can assess not just whether a model performs its claimed task but whether it reflects the values it claims to embody. Scale’s red-teaming, adversarial testing, and SEAL methodology are the closest thing that exists to independent value verification for AI systems. That work is relevant to every government and enterprise deploying AI, not only those in the US defense context.

Tactics:

Build a “What Does Your AI Actually Believe” thought-leadership program that publishes Scale’s findings from adversarial testing and red-teaming across model categories. The argument: AI systems are trained to claim certain values. Independent evaluation reveals whether those claims are accurate. Scale has been doing that evaluation for the most consequential AI systems for nine years.

This positioning allows Scale to carry the defense sector credibility into commercial AI contexts without alienating global enterprise customers who are not aligned with the American AI supremacy framing.

The comparison that matters most for understanding Scale AI’s brand position is the comparison to Anthropic. Both companies are in the AI safety and accountability space in different ways. Anthropic builds safety into the most powerful AI systems it can build, accepting the risk that those systems exist in exchange for influence over how they develop. Scale AI evaluates those systems from outside, providing independent human judgment on whether they are actually doing what they claim.

Anthropic scores 8.5 overall. Scale AI scores 6.8. The gap is entirely in lived stake and aspiration. Both companies have the same friction score and similar qualification scores. The difference is that Anthropic’s founders left OpenAI because they believed they had to, and built a company organized around a conviction they held personally. Scale’s founder observed a structural problem and built the infrastructure to address it. Both are valuable. One earns a different kind of trust.

Post-Wang, Scale AI has an opportunity to rebuild its brand around the accountability function that has been at the center of its business from the beginning. The data labeling, the RLHF annotation, the red-teaming, the SEAL evaluation, Scale Labs: all of it is human accountability applied to AI development. The aspiration to build around is that AI deployed in consequential contexts should be accountable to human judgment before it affects human lives. Scale AI has been building the infrastructure for that accountability since 2016.

The brand has not yet said so.

This analysis is part of an ongoing series applying the Narrative Authenticity Framework to company brands. The framework scores four components: Friction, Lived Stake, Distinctive Qualification, and Real Aspiration. Previous analyses include Patagonia, Anthropic, Airbnb, Netflix, Figma, Honeycomb, Stripe, Vanta, Okta, Writer, DigitalOcean, Jasper, Affirm, Shopify, Wasabi, Cato Networks, Nebius, Forge, Patreon, MongoDB, Tempus AI, Neo4j, Global Relay, Moveo AI, Mistral AI, and others.

I am Tim Donovan, founder of SeekArgus and senior marketing and communications advisor at Enterra Solutions. I publish the SeekingAI newsletter on Substack for senior marketing executives at seekargus.substack.com.